Catch post-deployment regressions from feedback

For when your deploys pass all tests but customers notice something broke.

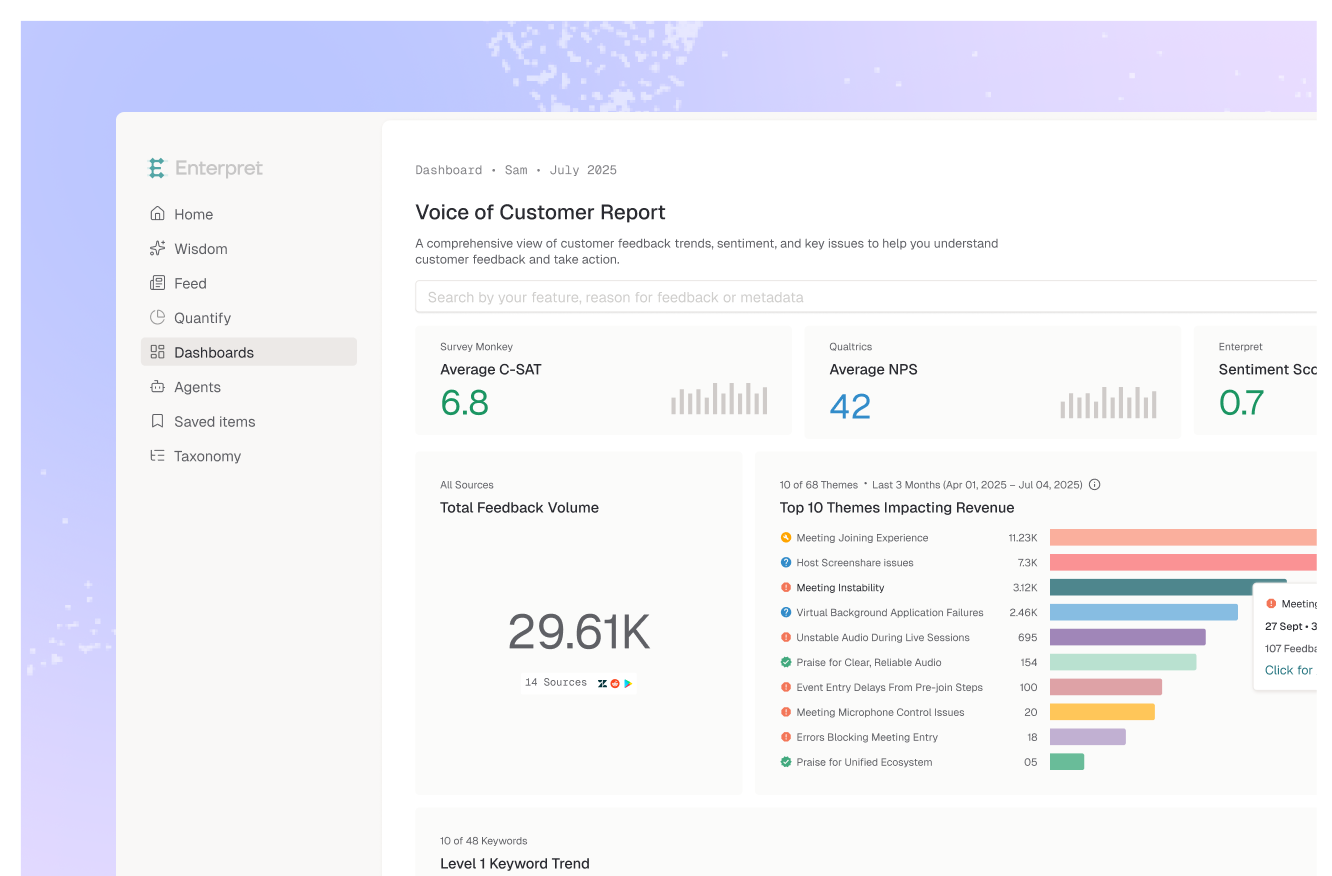


How teams use Enterpret today

Situation

After a checkout flow update, internal monitoring showed no errors. But customers started reporting payment confusion within hours.

Action — asked Enterpret AI

Show me any feedback themes that spiked in the 48 hours after our last deployment on [date].

Impact

Wisdom confirmed the regression: customers described the exact behavior change. The team linked the spiking feedback theme to the deploy ticket in Jira or Linear, with 12 customer quotes and the affected account count attached automatically, and reverted the change within hours.
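To make the idea of a theme "spiking" after a deployment concrete, here is a minimal sketch that compares each theme's volume in the 48 hours after a deploy with the 48 hours before it. It is an illustration only, not how Enterpret detects spikes: the `(theme, timestamp)` input shape, the function name `spiking_themes`, and the `min_ratio` and `min_count` thresholds are all assumptions made for this example.

```python
from collections import Counter
from datetime import datetime, timedelta


def spiking_themes(feedback, deploy_time, window_hours=48, min_ratio=2.0, min_count=3):
    """Return (theme, count_before, count_after) for themes that spiked post-deploy.

    feedback: iterable of (theme, timestamp) pairs. This shape is hypothetical.
    A theme counts as spiking when it has at least `min_count` mentions after
    the deploy and at least `min_ratio` times its pre-deploy volume.
    """
    window = timedelta(hours=window_hours)
    before, after = Counter(), Counter()
    for theme, ts in feedback:
        if deploy_time - window <= ts < deploy_time:
            before[theme] += 1
        elif deploy_time <= ts < deploy_time + window:
            after[theme] += 1

    spikes = []
    for theme, n_after in after.items():
        n_before = before.get(theme, 0)
        # Treat an empty baseline as 1 so brand-new themes do not divide by zero.
        ratio = n_after / max(n_before, 1)
        if n_after >= min_count and ratio >= min_ratio:
            spikes.append((theme, n_before, n_after))
    # Largest post-deploy volume first.
    return sorted(spikes, key=lambda s: s[2], reverse=True)


# Made-up sample data for illustration.
deploy = datetime(2024, 1, 10, 12, 0)
sample = (
    [("payment confusion", deploy - timedelta(hours=5))]
    + [("payment confusion", deploy + timedelta(hours=h)) for h in (1, 2, 3, 6, 20)]
    + [("login", deploy - timedelta(hours=h)) for h in (3, 30)]
    + [("login", deploy + timedelta(hours=h)) for h in (4, 40)]
)
result = spiking_themes(sample, deploy)
```

A before/after ratio is the simplest possible baseline; it ignores weekly seasonality and overall traffic changes, which a production system would need to account for.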

Situation

An engineering team shipped a performance fix and wanted to confirm it helped from the customer's perspective.

Action — configured Quality Monitor agent in Slack

Quality Monitor agent tracks the [performance complaint] theme over 2 weeks post-fix. Alert if volume doesn't decline by 50%.

Impact

Feedback on the theme dropped 85% over 2 weeks, confirming that the fix improved the customer experience, not just the server metrics.
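The alert rule in this example is simple arithmetic: compare complaint volume after the fix with a baseline and alert if the decline falls short of 50%. The sketch below shows that calculation under stated assumptions. The function name `decline_alert` and the sample counts are hypothetical, chosen only so the numbers reproduce an 85% drop; this is not the Quality Monitor agent's actual implementation.

```python
def decline_alert(baseline_count, post_fix_count, required_decline=0.50):
    """Return (decline, should_alert) for a theme's complaint volume.

    decline is the fractional drop from the baseline period to the post-fix
    period. should_alert is True when the drop is smaller than required.
    """
    if baseline_count <= 0:
        raise ValueError("baseline_count must be positive to compute a decline")
    decline = (baseline_count - post_fix_count) / baseline_count
    return decline, decline < required_decline


# Made-up counts: 200 complaints before the fix, 30 in the 2 weeks after.
decline, should_alert = decline_alert(200, 30)
print(f"decline: {decline:.0%}, alert: {should_alert}")
```

With these illustrative counts the decline clears the 50% threshold, so no alert fires. A count of 120 after the fix would be only a 40% decline and would trigger one.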


Situation

After a deployment, an engineer noticed a quality monitor alert about "slow loading" complaints. They needed to investigate the specific customer-reported patterns.

Action - prompted Claude with Enterpret MCP connector

What customer complaints about performance or slow loading emerged since [deploy date]? Group by feature area and show the specific behaviors customers describe.

Impact

Found a latency regression in EU data centers within minutes, working from customer descriptions surfaced directly in the engineer's terminal. The team patched it in the same sprint.