How to Analyze Customer Feedback with AI

AI can analyze customer feedback at a scale and consistency that manual processes can't match. The difference isn't just speed — it's what becomes visible when you're categorizing thousands of responses instead of sampling hundreds. Here is how AI-powered feedback analysis actually works, what it requires to produce reliable results, and where human judgment still belongs in the process.

What AI Feedback Analysis Actually Does

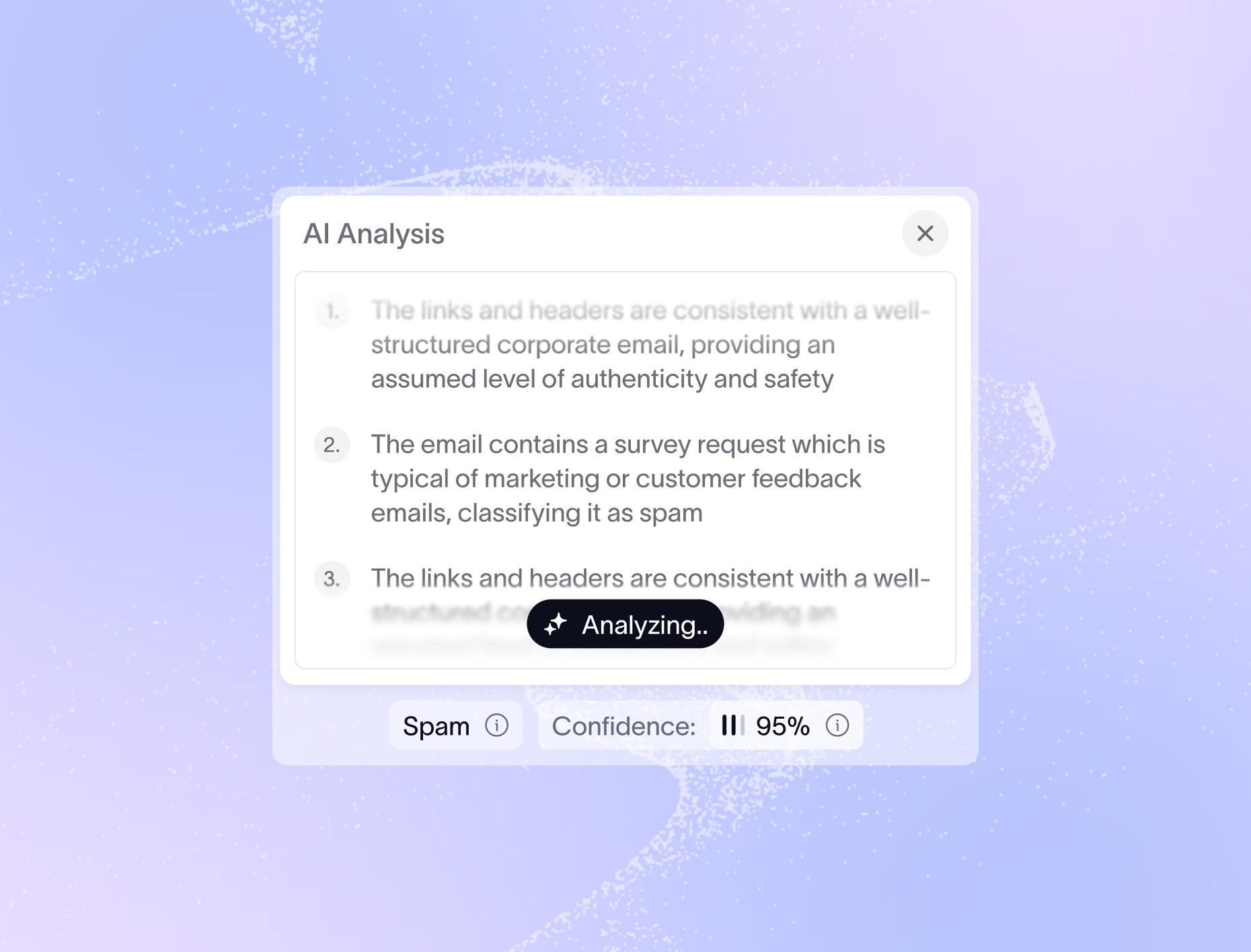

The term "AI analysis" gets applied to a wide range of things, from basic sentiment classification ("positive/negative/neutral") to large language models summarizing transcripts. Understanding what's happening under the hood matters, because the quality of your analysis depends on it.

At the useful end of the spectrum, AI feedback analysis does three things. It reads unstructured text — support tickets, NPS verbatims, review site comments, sales call transcripts — and categorizes it against a defined taxonomy. It does this consistently, applying the same logic to the ten-thousandth ticket that it applied to the first. And it does it at volume, making it possible to process the full body of feedback rather than a sample.

What AI doesn't do, on its own, is understand your business. A general-purpose model reading customer feedback about "the dashboard" doesn't know whether your dashboard is a core product feature or a secondary reporting view, whether "dashboard" is customer language for three different things, or which complaints about it are actually about a different part of the product. That context has to be built in.

What You Need Before AI Analysis Can Work

Most teams that get poor results from AI feedback analysis have a setup problem, not a technology problem. Three inputs determine output quality.

Start with your taxonomy. If it labels everything as "Product," "Support," and "Billing," AI classification will fill those buckets and tell you almost nothing useful. A taxonomy that maps to your actual product areas, specific features, and customer outcomes produces analysis you can act on. The more specific the taxonomy, the more specific the insight.
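To make the difference concrete, here is a minimal Python sketch that runs the same four tickets through a generic taxonomy and a more specific one. The taxonomies, labels, and tickets are all hypothetical. Simple keyword matching stands in for the trained or LLM-based classifier a real tool would use, so focus on the shape of the output rather than the matching method.

```python
from collections import Counter

# Hypothetical taxonomies for illustration only. Each label maps to phrases
# that signal it; simple keyword matching stands in for a trained or
# LLM-based classifier.
GENERIC = {
    "Product": ["report", "dashboard", "import"],
    "Billing": ["charged", "invoice"],
}
SPECIFIC = {
    "Reporting / Report sharing": ["share", "sharing"],
    "Reporting / Dashboard speed": ["slow", "loading"],
    "Billing / Duplicate charges": ["charged twice", "double charge"],
    "Onboarding / Data import": ["import", "upload"],
}


def classify(text: str, taxonomy: dict[str, list[str]]) -> list[str]:
    """Return every label whose signal phrases appear in the text."""
    lowered = text.lower()
    return [
        label
        for label, phrases in taxonomy.items()
        if any(phrase in lowered for phrase in phrases)
    ]


def theme_counts(tickets: list[str], taxonomy: dict[str, list[str]]) -> Counter:
    """Apply the same rules to every ticket and count how often each label fires."""
    return Counter(label for ticket in tickets for label in classify(ticket, taxonomy))


tickets = [
    "Can't share the weekly report with my manager",
    "Dashboard is slow every Monday morning",
    "I was charged twice this month",
    "CSV import fails on large files",
]

print("generic: ", theme_counts(tickets, GENERIC))
print("specific:", theme_counts(tickets, SPECIFIC))
```

Run as-is, the generic taxonomy files three of the four tickets under "Product." The specific one separates them into report sharing, dashboard speed, duplicate charges, and data import, and each of those points at a different owner and a different fix.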

Training data matters almost as much. Customers don't use your internal vocabulary: they describe features by what they do, not what you call them, and they name problems in terms of their workflow, not your architecture. AI models trained and validated against real feedback from your customer base tend to outperform general-purpose classifiers on the metrics that count: precision, recall, and whether the results actually change decisions.
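One way to check a classifier against your own customers' language is to hand-label a sample of real tickets and compare the results. The sketch below assumes one label per ticket, and the labels and validation sample are invented for illustration. Precision asks how often the model is right when it applies a label. Recall asks how much of that label's true feedback the model actually finds.

```python
# Hypothetical validation sample: for each ticket, the label the model
# assigned and the label a human reviewer assigned. One label per ticket
# is assumed for simplicity.
validation = [
    ("Report sharing", "Report sharing"),
    ("Report sharing", "Dashboard speed"),
    ("Dashboard speed", "Dashboard speed"),
    ("Other", "Report sharing"),
    ("Other", "Report sharing"),
    ("Report sharing", "Report sharing"),
]


def precision_recall(pairs: list[tuple[str, str]], label: str) -> tuple[float, float]:
    """Precision: of tickets the model filed under `label`, the share humans agree with.
    Recall: of tickets humans filed under `label`, the share the model caught."""
    true_pos = sum(1 for pred, gold in pairs if pred == label and gold == label)
    predicted = sum(1 for pred, _ in pairs if pred == label)
    actual = sum(1 for _, gold in pairs if gold == label)
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


for label in ("Report sharing", "Dashboard speed"):
    p, r = precision_recall(validation, label)
    print(f"{label}: precision={p:.2f} recall={r:.2f}")
```

In this made-up sample, "Report sharing" scores 0.67 precision and 0.50 recall. The model files a dashboard complaint under it and misses half of the real sharing complaints, a pattern that suggests vocabulary the model hasn't learned yet.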

The third input is customer context. Raw feedback categorized at scale tells you what customers are saying. Feedback enriched with ARR, plan type, tenure, and product usage tells you who is saying it and what it's worth. The enrichment step is what converts categorized data into business decisions.
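In practice, the enrichment step amounts to a join between categorized feedback and account data. This minimal sketch assumes each feedback record carries an account ID and that ARR and plan live in a separate lookup. The field names, accounts, and figures are hypothetical. ARR is counted once per account per theme, so a customer who complains twice doesn't double the revenue at stake.

```python
from collections import defaultdict

# Hypothetical categorized feedback and account data; field names
# (account_id, theme, arr, plan) are assumptions for this sketch.
feedback = [
    {"account_id": "a1", "theme": "Report sharing"},
    {"account_id": "a2", "theme": "Report sharing"},
    {"account_id": "a3", "theme": "Duplicate charges"},
    {"account_id": "a1", "theme": "Duplicate charges"},
    {"account_id": "a4", "theme": "Report sharing"},
    {"account_id": "a2", "theme": "Report sharing"},  # repeat mention, same account
]
accounts = {
    "a1": {"arr": 120_000, "plan": "Enterprise"},
    "a2": {"arr": 8_000, "plan": "Team"},
    "a3": {"arr": 2_000, "plan": "Starter"},
    "a4": {"arr": 95_000, "plan": "Enterprise"},
}


def enrich(records, accounts):
    """Attach account attributes (ARR, plan, ...) to each feedback record."""
    return [{**r, **accounts.get(r["account_id"], {})} for r in records]


def impact_by_theme(records):
    """Per theme: mention count, distinct accounts, and total ARR of those accounts."""
    summary = defaultdict(lambda: {"mentions": 0, "arr_by_account": {}})
    for r in records:
        entry = summary[r["theme"]]
        entry["mentions"] += 1
        # Keyed by account, so repeat mentions don't double-count ARR.
        entry["arr_by_account"][r["account_id"]] = r.get("arr", 0)
    return {
        theme: {
            "mentions": e["mentions"],
            "accounts": len(e["arr_by_account"]),
            "arr": sum(e["arr_by_account"].values()),
        }
        for theme, e in summary.items()
    }


for theme, stats in impact_by_theme(enrich(feedback, accounts)).items():
    print(theme, stats)
```

Other attributes such as tenure or product usage can be attached the same way and used to slice the summary further.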

What AI Makes Possible That Wasn't Possible Before

Once the setup is right, AI analysis changes what your team can see and how fast they can see it.

Coverage goes from partial to complete. Manual review of 10,000 monthly support tickets is a full-time job for multiple analysts. AI can process the same volume in minutes, which means every ticket, every verbatim, and every call transcript informs your understanding of what customers are experiencing, not just a sample of it.
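A quick back-of-the-envelope calculation shows why full coverage is out of reach manually. Only the 10,000-ticket volume comes from the text above. The three minutes per ticket and 160 working hours per analyst per month are assumptions, so replace them with your own numbers.

```python
# Back-of-the-envelope estimate. Only the ticket volume comes from the text;
# the per-ticket time and monthly hours are assumptions to adjust.
TICKETS_PER_MONTH = 10_000
MINUTES_PER_TICKET = 3          # assumption: read, tag, and log one ticket
HOURS_PER_ANALYST_MONTH = 160   # assumption: one full-time analyst

review_hours = TICKETS_PER_MONTH * MINUTES_PER_TICKET / 60
analysts_needed = review_hours / HOURS_PER_ANALYST_MONTH

print(f"{review_hours:.0f} review hours per month, "
      f"about {analysts_needed:.1f} full-time analysts")
```

Under those assumptions the workload is 500 hours a month, a bit over three full-time analysts, before any cross-referencing or reporting.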

Trend detection becomes continuous rather than quarterly. When AI is categorizing feedback in real time, you can see a theme growing before it becomes a crisis. A complaint that appears in 2% of tickets in January and 6% in February is a signal worth acting on. Quarterly analysis finds it in April.
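Continuous trend detection can be as simple as tracking each theme's share of total tickets month over month and flagging sharp growth. This sketch uses the 2% to 6% example from the paragraph above. The `rising_themes` function, its doubling threshold, and the 1% floor for ignoring tiny themes are illustrative assumptions, not recommended settings.

```python
# Hypothetical monthly counts: theme -> [(month, tickets with theme, total tickets)].
history = {
    "Report sharing": [("Jan", 200, 10_000), ("Feb", 600, 10_000)],
    "Duplicate charges": [("Jan", 150, 10_000), ("Feb", 160, 10_000)],
}


def rising_themes(history, min_ratio=2.0, min_share=0.01):
    """Flag a theme when its share of all tickets grows by at least `min_ratio`
    from one month to the next and is at least `min_share` (to ignore tiny
    themes). Both thresholds are illustrative defaults."""
    flagged = []
    for theme, months in history.items():
        for (_, prev_count, prev_total), (month, count, total) in zip(months, months[1:]):
            prev_share = prev_count / prev_total if prev_total else 0.0
            share = count / total if total else 0.0
            if prev_share > 0 and share >= min_share and share / prev_share >= min_ratio:
                flagged.append((theme, month, prev_share, share))
    return flagged


for theme, month, before, after in rising_themes(history):
    print(f"{theme}: {before:.0%} -> {after:.0%} in {month}")
```

It prints one line, `Report sharing: 2% -> 6% in Feb`, and leaves the stable billing theme alone.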

Cross-source synthesis becomes possible. Connecting the same theme across support tickets, NPS verbatims, and sales call transcripts manually requires coordination between multiple teams and usually doesn't happen. AI applied across all feedback sources surfaces patterns that would otherwise stay siloed — the same onboarding friction driving support tickets also showing up in deal losses and churn surveys.
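Cross-source synthesis only works if every source is categorized against the same taxonomy. Once that holds, finding themes that span channels becomes a grouping problem. The source names, themes, and `cross_source_themes` helper below are hypothetical.

```python
from collections import defaultdict

# Hypothetical (source, theme) pairs, assuming every source has already been
# categorized against the same taxonomy.
records = [
    ("support_ticket", "Onboarding friction"),
    ("sales_call", "Onboarding friction"),
    ("churn_survey", "Onboarding friction"),
    ("support_ticket", "Duplicate charges"),
    ("nps_verbatim", "Report sharing"),
    ("support_ticket", "Report sharing"),
]


def cross_source_themes(records, min_sources=2):
    """Return themes that appear in at least `min_sources` distinct sources."""
    sources_by_theme = defaultdict(set)
    for source, theme in records:
        sources_by_theme[theme].add(source)
    return {
        theme: sorted(sources)
        for theme, sources in sources_by_theme.items()
        if len(sources) >= min_sources
    }


print(cross_source_themes(records))
```

Here onboarding friction shows up in support tickets, sales calls, and churn surveys. That kind of pattern tends to stay hidden when each team reads only its own channel.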

Where Human Judgment Still Belongs

AI feedback analysis works best when it handles classification and pattern detection, and humans handle interpretation and decision-making. There are two places where human judgment is irreplaceable.

Taxonomy design and maintenance. AI will classify feedback against whatever taxonomy you give it. If the taxonomy has gaps, the AI has gaps. If a new product area launches and isn't reflected in the taxonomy, feedback about it gets misfiled. Keeping the taxonomy current is a human responsibility, and it needs a regular review cadence — quarterly at minimum.
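One practical signal that the taxonomy needs attention is a rising share of feedback that matches no category at all. This sketch assumes the classifier returns an empty label list for unmatched records. The data, the 10% cutoff, and the function name are hypothetical. The function also returns a sample of unmatched text, because deciding what new category those records need is the human part of the job.

```python
# Hypothetical classifier output: (feedback text, labels assigned). An empty
# list means nothing in the current taxonomy matched.
classified = [
    ("Can't share reports with my team", ["Report sharing"]),
    ("Charged twice in March", ["Duplicate charges"]),
    ("The new mobile app crashes on login", []),
    ("Mobile app won't sync my saved views", []),
    ("CSV import fails on large files", ["Data import"]),
]


def taxonomy_gap_report(classified, threshold=0.10, sample_size=3):
    """Return the unmatched rate, a sample of unmatched feedback for a human
    to read, and whether the rate exceeds `threshold` (an assumed cutoff)."""
    unmatched = [text for text, labels in classified if not labels]
    rate = len(unmatched) / len(classified) if classified else 0.0
    return rate, unmatched[:sample_size], rate > threshold


rate, sample, needs_review = taxonomy_gap_report(classified)
print(f"unmatched: {rate:.0%}, review taxonomy: {needs_review}")
for text in sample:
    print(" -", text)
```

In this example two of five records, both about a hypothetical new mobile app, match nothing. The resulting 40% unmatched rate trips the review flag.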

Acting on what the data says. AI can tell you that complaints about report-sharing grew 40% last quarter among enterprise customers. It can't tell you whether to ship a feature, fix a workflow, or update your onboarding. That decision requires business context, stakeholder input, and judgment about tradeoffs. The analysis narrows the question. The team still has to answer it.

What to Look for When Evaluating AI Feedback Tools

Not all AI feedback analysis tools are built the same way. Four capabilities separate tools that produce reliable insight from tools that produce impressive-looking dashboards with questionable data underneath.

Does it learn your specific language, or apply a general model? Generic models produce generic results. A tool that adapts to your product vocabulary, your customer terminology, and your taxonomy will outperform a general-purpose classifier on the feedback that actually matters to your business.

Can you see how it's categorizing? Black-box AI is hard to trust and harder to improve. The ability to inspect individual categorizations, understand why a ticket was filed under a particular theme, and correct mistakes is what lets you improve accuracy over time.
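Inspectability mostly comes down to storing why a label was assigned alongside the label itself, and keeping a record of human corrections. The sketch below is hypothetical. Keyword matching stands in for a real model, and the `Categorization` dataclass and correction log are one possible design, not any particular tool's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Categorization:
    text: str
    label: Optional[str]
    evidence: Optional[str]  # why the label was chosen, shown to reviewers


# Hypothetical taxonomy; keyword matching stands in for a real model.
TAXONOMY = {
    "Report sharing": ["share"],
    "Dashboard speed": ["slow", "load"],
}


def categorize(text: str, taxonomy: dict) -> Categorization:
    """Assign the first matching label and record the phrase that triggered it."""
    lowered = text.lower()
    for label, phrases in taxonomy.items():
        for phrase in phrases:
            if phrase in lowered:
                return Categorization(text, label, f"matched phrase '{phrase}'")
    return Categorization(text, None, None)


def apply_correction(result: Categorization, new_label: str, log: list) -> Categorization:
    """Record a human override so corrections can be audited or fed back later."""
    log.append((result.text, result.label, new_label))
    return Categorization(result.text, new_label, f"human override of {result.label!r}")


corrections: list = []
result = categorize("Shared dashboards are slow to load", TAXONOMY)
print(result.label, "|", result.evidence)
fixed = apply_correction(result, "Dashboard speed", corrections)
print(fixed.label, "|", fixed.evidence)
```

The ticket is really about dashboard speed, but the word "Shared" triggers the sharing label. Because the evidence is visible, a reviewer can spot the mistake, override it, and leave a logged correction that could later be used to improve the classifier.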

Does it handle multiple feedback sources, or just one? If the tool only processes support tickets, you're still running separate analyses for NPS, reviews, and sales calls. The synthesis across sources is often where the most valuable patterns live.

How does it handle taxonomy changes? Products evolve, customer vocabulary shifts, and business priorities change. A tool that requires re-categorizing your entire historical data set every time your taxonomy changes creates a fragility that limits how useful the analysis stays over time.
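One way to avoid reprocessing all history after a taxonomy change is to treat renames and merges as a label lookup and reclassify only records whose old label was split. This is a sketch under assumed data. The labels, the `RENAMES` and `SPLIT_LABELS` tables, and `migrate_labels` are hypothetical, and a real tool may handle this differently.

```python
# Hypothetical historical labels: (record_id, label) under the old taxonomy.
history = [
    (1, "Export issues"),
    (2, "PDF export"),
    (3, "Duplicate charges"),
    (4, "Reporting"),
]

# Assumed taxonomy change: two labels merge into "Exports", and the broad
# "Reporting" label is split into finer labels.
RENAMES = {"Export issues": "Exports", "PDF export": "Exports"}
SPLIT_LABELS = {"Reporting"}


def migrate_labels(history, renames, split_labels):
    """Carry renamed or merged labels forward with a lookup; only records whose
    old label was split need to be categorized again."""
    migrated, to_reclassify = [], []
    for record_id, label in history:
        if label in split_labels:
            to_reclassify.append(record_id)
        else:
            migrated.append((record_id, renames.get(label, label)))
    return migrated, to_reclassify


migrated, to_reclassify = migrate_labels(history, RENAMES, SPLIT_LABELS)
print(migrated)
print("needs reclassification:", to_reclassify)
```

Only record 4 goes back through the classifier. The rest keep their history under the new labels.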

Where Enterpret Fits In

Enterpret is built for exactly this problem. Its AI learns your specific product language and customer vocabulary, applying an adaptive taxonomy that updates as your product and business evolve. Feedback from every source — support tickets, NPS verbatims, sales call transcripts, review sites, in-app surveys — is categorized in one place, enriched with customer attributes, and surfaced as trends your team can act on. Every categorization is inspectable, and the taxonomy can be updated without losing your historical analysis.

See how Enterpret analyzes customer feedback with AI.

Frequently Asked Questions

What does AI feedback analysis actually do?

AI feedback analysis reads unstructured text (support tickets, NPS verbatims, review comments, call transcripts) and classifies it against a defined taxonomy of categories. It applies that classification consistently at volume, making it possible to analyze every piece of feedback rather than a sample. The key distinction from manual analysis isn't speed alone: it's that patterns become visible at a scale where they were previously invisible.

What do you need before AI feedback analysis can work?

Three inputs determine output quality. The first is a specific taxonomy, one mapped to your actual product areas and customer outcomes rather than generic categories like "Product" and "Support." The second is training data that reflects how your customers actually write, not your internal vocabulary. The third is customer context enrichment: ARR, plan type, tenure, and usage attached to each record, so that frequency and severity are always visible in the context of business impact.

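What does AI make possible that manual feedback analysis cannot?

Three things. Coverage goes from partial to complete: every ticket, verbatim, and call transcript is analyzed, not a sample. Trend detection becomes continuous rather than quarterly, so a complaint that grows from 2% of tickets in January to 6% in February shows up in February instead of in an April review. And cross-source synthesis becomes practical: the same theme can be traced across support tickets, NPS verbatims, and sales call transcripts instead of staying siloed by team.

Where does human judgment still belong in AI feedback analysis?

Human judgment is irreplaceable in two places. The first is taxonomy design and maintenance: AI classifies feedback against whatever taxonomy you give it, so if the taxonomy has gaps or doesn't reflect new product areas, the AI has those same gaps. Keeping the taxonomy current is a human responsibility. The second is acting on what the data says: AI can tell you that complaints about report-sharing grew 40% last quarter, but it can't tell you whether to ship a feature, fix a workflow, or update onboarding. That judgment call belongs to the team.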

What should you look for when evaluating AI feedback tools?

Four capabilities matter most. Does it learn your specific product vocabulary, or apply a general model? Can you inspect how individual records are being categorized? Does it handle multiple feedback sources, or only one channel? And how does it handle taxonomy changes: does updating a category require re-categorizing your entire historical dataset? Tools that fail on any of these produce dashboards that look useful but carry compounding errors underneath.

What is a feedback taxonomy?

A feedback taxonomy is the structured set of categories that AI uses to classify customer feedback. It's the organizing logic beneath all your labels and themes. A taxonomy mapped to your actual product areas and customer outcomes produces analysis you can act on. A generic taxonomy, or one that doesn't reflect how customers actually describe their experiences, produces fast noise. The quality of your AI analysis is a direct function of the quality of the taxonomy underneath it.