An NPS score of 4 doesn't tell you anything actionable. The 12-word verbatim under it tells you everything.

The score says a customer is unhappy. The verbatim tells you whether they're 60 days from cancelling or just venting about a flaky integration they'll forget about by Friday. Identifying churn risk from NPS verbatims means analyzing the language patterns in detractor and passive comments — switching language, missing-feature mentions, time-bound complaints, integration breakdowns, and support escalation references — and intervening within a 48-hour window before the customer's frustration calcifies into a renewal decision.

The short answer: tag every detractor and passive verbatim against a 5-pattern churn taxonomy (switching language, missing feature, time-bound complaint, integration breakdown, support escalation), route the matches to a customer success manager (CSM) playbook with a 48-hour SLA, and confirm the signal against usage data before the renewal conversation.

Why the score is a lagging indicator and the verbatim is a leading one

NPS as a number is a snapshot of a feeling at a point in time. By the time a customer drops from a 9 to a 4, the underlying churn decision has usually already been made — they've evaluated alternatives, pulled a competitor into a pilot, or had the budget conversation with finance. The score is the receipt, not the diagnosis.

The verbatim is different. The verbatim is the customer telling you, in their own words, the chain of cause and effect that led to the score. Detractor scores have roughly 60-90 day lead times to churn. Detractor verbatims that contain switching language have lead times closer to 30-45 days. The shorter the window between signal and decision, the more important it is to read the words, not the digits.

This is the central inversion you have to internalize: a 6 with the verbatim "we're piloting [competitor] next month because your reporting is too rigid" is a higher churn risk than a 3 with the verbatim "the dashboard loaded slowly today and I'm in a bad mood." Same NPS bucket, completely different intervention.
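
To make the inversion concrete, here is a minimal Python sketch of a triage queue that sorts on what the comment says before it looks at the score. It assumes each response already carries a set of churn-pattern tags; the field names and the `switching_language` tag are illustrative, not taken from any particular survey tool.

```python
def nps_bucket(score: int) -> str:
    """Standard NPS buckets: 0-6 detractor, 7-8 passive, 9-10 promoter."""
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def triage_order(responses: list[dict]) -> list[dict]:
    """Tagged verbatims first (most tags first); ties broken by lower score."""
    return sorted(responses, key=lambda r: (-len(r["tags"]), r["score"]))


responses = [
    {"id": "venting", "score": 3, "tags": set()},
    {"id": "piloting-competitor", "score": 6, "tags": {"switching_language"}},
]
queue = triage_order(responses)
print([r["id"] for r in queue])  # ['piloting-competitor', 'venting']
```

Both responses land in the detractor bucket, yet the 6 is worked first because the ordering keys on the tags rather than the number.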

The 5 verbatim patterns that predict churn

After tagging tens of thousands of NPS verbatims across B2B SaaS cohorts, the patterns that actually correlate with renewal loss collapse into five categories. Treat this as a starting taxonomy, not a finished one — your product, segment, and contract structure will shift the weights — but the categories themselves are durable.

1. Switching language

Any verbatim that mentions a competitor by name, or that uses phrases like "evaluating alternatives," "looking at other vendors," "POCing," or "running a pilot with," is the highest-precision churn signal in the entire dataset. In our internal benchmarking, accounts with a single switching-language verbatim in the last 90 days churn at roughly 3-4x the base rate for their segment.

This pattern is rare — most detractors don't volunteer competitive intel — which is why it's so valuable when you find it. Don't dilute it by lumping it in with general complaints.

2. Missing-feature mentions

Verbatims that name a specific capability the customer needs and your product doesn't have: "we'd love to see X," "the only thing missing is Y," "it would be a 9 if you had Z." On the surface this looks like product feedback. In the churn context, it's a measurement of workflow incompleteness — the customer is telling you they're routing around your product to get a job done, and every workaround is a foothold for a competitor.

The signal strengthens when the same missing feature appears across multiple users in the same account, or when the verbatim is paired with switching language.
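
One way to check whether the same gap is being named by multiple users in an account is to count distinct respondents per account and feature. The sketch below assumes each verbatim has already been labeled with a `feature` value; that label and the other field names are hypothetical.

```python
from collections import defaultdict


def corroborated_gaps(rows: list[dict], min_users: int = 2) -> dict:
    """Return {(account_id, feature): user_count} for gaps named by
    at least `min_users` distinct users in the same account."""
    users = defaultdict(set)
    for row in rows:
        users[(row["account_id"], row["feature"])].add(row["user_id"])
    return {key: len(ids) for key, ids in users.items() if len(ids) >= min_users}


rows = [
    {"account_id": "acct-1", "user_id": "u1", "feature": "custom reporting"},
    {"account_id": "acct-1", "user_id": "u2", "feature": "custom reporting"},
    # A repeat from the same user does not count as extra corroboration.
    {"account_id": "acct-1", "user_id": "u2", "feature": "custom reporting"},
    {"account_id": "acct-2", "user_id": "u9", "feature": "custom reporting"},
]
gaps = corroborated_gaps(rows)
```

Counting distinct users rather than raw mentions keeps one vocal respondent from looking like an account-wide problem.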

3. Time-bound complaints

"Renewal is coming up and we're not sure." "Q3 budget review is next month." "Our contract is up in November." Time-bound language is the customer doing your churn forecasting for you. Anything with an explicit date or fiscal milestone in the verbatim should be treated as a tier-one save play with the urgency dialed to match the timeline they named.

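Matching urgency to the timeline the customer named can start with something as simple as pulling a month out of the comment and counting the days to it. This is a rough sketch under stated assumptions: it only recognizes English month names, it ignores fiscal quarters (fiscal calendars differ by company), and the function name is illustrative.

```python
import re
from datetime import date
from typing import Optional

MONTHS = [
    "january", "february", "march", "april", "may", "june", "july",
    "august", "september", "october", "november", "december",
]
# Caveat: "may" also matches the verb ("we may renew"), so review those hits.
MONTH_RX = re.compile(r"\b(" + "|".join(MONTHS) + r")\b", re.IGNORECASE)


def days_until_named_month(text: str, today: date) -> Optional[int]:
    """Days from `today` to the 1st of the next month named in `text`.

    Returns 0 if `today` already falls in the named month, and None if
    no month name is found.
    """
    match = MONTH_RX.search(text)
    if match is None:
        return None
    month = MONTHS.index(match.group(1).lower()) + 1
    year = today.year if month >= today.month else today.year + 1
    return max(0, (date(year, month, 1) - today).days)


remaining = days_until_named_month("Our contract is up in November.", date(2024, 9, 1))
print(remaining)  # 61
```

A smaller number of days remaining argues for a faster, more senior outreach.
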
4. Integration breakdowns

"The Salesforce sync keeps failing." "We can't get the Snowflake connector to work reliably." Integration verbatims look operational, but the underlying risk is structural: when your product stops being trustworthy as a system of record or a system of action inside the customer's stack, you become optional. Optional tools get cut at renewal. Integration complaints in the last 60 days predict churn at roughly 2x base rate, and they tend to compound — one broken integration usually surfaces a second.

5. Support escalation references

Verbatims that reference a ticket number, a long-running issue, a slow response, or a CSM/support handoff that "went nowhere" capture the moment a customer transitioned from "this product has a bug" to "this vendor doesn't have its act together." That's a fundamentally different category of frustration. The first is a product gap; the second is a relationship gap, and relationship gaps are what actually drive non-renewal decisions in mid-market and enterprise.
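
The five patterns above can be sketched as a first-pass tagger. This is a keyword baseline for illustration only: the phrase lists are seeded from the examples in this article, the tag names are made up for the sketch, and a static list like this is a starting point rather than a production classifier.

```python
import re

FLAGS = re.IGNORECASE | re.DOTALL

# Seed phrases taken from the examples in the text; extend per product.
PATTERNS = {
    "switching_language": re.compile(
        r"\b(evaluating alternatives|other vendors|poc(?:ing)?|pilot(?:ing)?)\b", FLAGS),
    "missing_feature": re.compile(
        r"(love to see|only thing missing|would be a \d+ if)", FLAGS),
    "time_bound": re.compile(
        r"\b(renewal|contract is up|budget review)\b", FLAGS),
    # Needs both an integration noun and a failure word somewhere in the comment.
    "integration_breakdown": re.compile(
        r"(?=.*\b(?:sync|connector|integration)\b)"
        r"(?=.*(?:fail|broke|can't|cannot|unreliabl))", FLAGS),
    "support_escalation": re.compile(
        r"\b(ticket|escalat\w+|went nowhere|slow response)\b", FLAGS),
}


def tag_verbatim(text: str, competitors: frozenset = frozenset()) -> set:
    """Return the set of churn-pattern tags whose seed phrases appear in `text`.

    `competitors` is a caller-supplied set of competitor names; any mention
    counts as switching language.
    """
    tags = {name for name, rx in PATTERNS.items() if rx.search(text)}
    lowered = text.lower()
    if any(name.lower() in lowered for name in competitors):
        tags.add("switching_language")
    return tags


print(tag_verbatim("The Salesforce sync keeps failing."))  # {'integration_breakdown'}
```

Keeping switching language as its own tag, rather than folding it into a general complaint bucket, preserves the precision that makes it valuable.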

The 48-hour intervention window

The hypothesis behind the 48-hour SLA is simple: customer frustration is most malleable in the hours immediately after they articulate it. The act of writing a churn-risk verbatim is itself a request for response. Wait a week and you've confirmed their suspicion that you don't care.

The mechanics break down into four moves:

Within hours of survey submission, classify the verbatim against the 5-pattern taxonomy. Manual triage doesn't scale — this has to be automated.

Pull the account's monthly recurring revenue (MRR), contract end date, usage trend, and prior support history. The verbatim is the trigger; the enrichment is the context that determines play selection.

Send to the CSM with a structured payload: pattern matched, snippet of the verbatim, recommended play, and a hard 48-hour clock. Don't let it sit in a generic inbox.

Outreach happens within 48 hours. The outcome (saved, escalated, or lost) gets written back to the account record so you can measure the program's save rate, not just its activity rate.
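
The routing payload and its clock can be sketched as a small record type. This is an illustrative shape, not a schema from any specific CRM or ticketing tool: the class name, field names, and play identifier are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

SLA = timedelta(hours=48)  # the 48-hour window from the text


@dataclass
class RoutedVerbatim:
    account_id: str
    pattern: str                   # which of the five patterns matched
    snippet: str                   # short excerpt of the verbatim
    recommended_play: str          # hypothetical play identifier
    submitted_at: datetime         # survey submission starts the clock
    first_touch_at: Optional[datetime] = None
    outcome: Optional[str] = None  # "saved", "escalated", or "lost"

    @property
    def due_at(self) -> datetime:
        return self.submitted_at + SLA

    def is_overdue(self, now: datetime) -> bool:
        """True if nobody has reached out and the 48-hour clock has run out."""
        return self.first_touch_at is None and now > self.due_at

    def met_sla(self) -> bool:
        """True if first outreach happened on or before the deadline."""
        return self.first_touch_at is not None and self.first_touch_at <= self.due_at


t0 = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
item = RoutedVerbatim("acct-1", "switching_language",
                      "piloting a competitor next month", "exec_outreach", t0)
```

Storing `first_touch_at` and `outcome` on the same record is what lets the program report save rate and SLA compliance from one place.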

The save rate metric is the one that matters. Activity volume tells you the team is busy. Save rate tells you the program works. Target: 30-40% or more of churn-risk verbatims neutralized within 30 days, measured by sentiment shift in subsequent surveys, support sentiment, and renewal outcome. If you're below 20%, the bottleneck is usually routing speed or play selection, not detection.
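
As a worked example of the metric, the sketch below computes save rate as the share of all flagged verbatims that were marked saved within the 30-day window. The record fields are hypothetical, and how "saved" gets decided (survey sentiment, support sentiment, renewal outcome) is left to the caller.

```python
from datetime import date, timedelta
from typing import Optional


def save_rate(records: list[dict], window_days: int = 30) -> Optional[float]:
    """Fraction of flagged verbatims marked 'saved' within `window_days`.

    Open, lost, escalated, and late-saved items all stay in the denominator.
    Returns None when there are no records.
    """
    if not records:
        return None
    window = timedelta(days=window_days)
    saved = sum(
        1 for r in records
        if r["outcome"] == "saved" and r["resolved_on"] - r["flagged_on"] <= window
    )
    return saved / len(records)


flagged = date(2024, 3, 1)
records = [
    {"flagged_on": flagged, "outcome": "saved", "resolved_on": date(2024, 3, 10)},
    {"flagged_on": flagged, "outcome": "saved", "resolved_on": date(2024, 3, 28)},
    {"flagged_on": flagged, "outcome": "saved", "resolved_on": date(2024, 4, 15)},  # too late
    {"flagged_on": flagged, "outcome": "lost", "resolved_on": date(2024, 3, 20)},
    {"flagged_on": flagged, "outcome": None, "resolved_on": None},  # still open
]
rate = save_rate(records)
print(rate)  # 0.4
```

Here two of five flagged verbatims were saved in time, which puts this sample at the top of the 30-40% target range.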

Combining NPS verbatims with usage data to confirm churn risk

The verbatim alone is a hypothesis. The usage data is what either confirms or kills it. A detractor verbatim from a power user with daily logins and growing query volume is often a passionate customer venting about a real friction point, which means a high save probability. A detractor verbatim from an account with declining daily active users (DAU), no executive sponsor logging in for 30+ days, and a stalled integration is a structural churn risk that no amount of CSM charm will fix.

The two data layers worth joining at the verbatim level:

- Engagement decay: 30-day and 90-day trend on weekly active users, query volume, and feature breadth. A verbatim plus a flat-or-declining engagement trend is a tier-one signal.

- Stakeholder coverage: who from the account is actually using the product, especially economic buyers and technical champions. Verbatims from the economic buyer carry 4-5x the weight of verbatims from end users.
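
The two layers above can be joined to a tagged verbatim with a small rule. This is a sketch under stated assumptions: the tier numbering, the field names, and the champion weight are illustrative, and the economic-buyer weight simply takes the low end of the 4-5x range quoted above.

```python
from dataclasses import dataclass

# 4.0 is the low end of the 4-5x range in the text; the technical-champion
# weight is a placeholder assumption, not a figure from the article.
ROLE_WEIGHT = {"economic_buyer": 4.0, "technical_champion": 2.0, "end_user": 1.0}


@dataclass
class AccountUsage:
    wau_90d_ago: int  # weekly active users 90 days ago
    wau_30d_ago: int  # weekly active users 30 days ago
    wau_now: int      # weekly active users today


def engagement_is_growing(usage: AccountUsage) -> bool:
    """Growing only if WAU is up over both the 30-day and 90-day windows."""
    return usage.wau_now > usage.wau_30d_ago and usage.wau_now > usage.wau_90d_ago


def confirm(tags: set, role: str, usage: AccountUsage) -> dict:
    """Tier 1 = churn pattern plus flat-or-declining engagement; tier 2 = growing."""
    if not tags:
        return {"tier": None, "weight": 0.0}
    tier = 2 if engagement_is_growing(usage) else 1
    return {"tier": tier, "weight": ROLE_WEIGHT.get(role, 1.0)}


structural = confirm({"integration_breakdown"}, "economic_buyer", AccountUsage(50, 45, 30))
venting = confirm({"missing_feature"}, "end_user", AccountUsage(20, 25, 40))
```

The first call returns tier 1 with weight 4.0 (declining usage, economic buyer); the second returns tier 2 with weight 1.0 (growing usage, end user).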

This is also where the broader category of feedback signals that indicate churn risk comes in — support tickets, community posts, sales call notes, win/loss interviews. The NPS verbatim is the most structured of these signals, but it isn't the only one, and a real churn-prevention program treats them as a single graph.

Building a churn-risk verbatim taxonomy your CSMs can act on

The 5 patterns above are the starting point. The work of operationalizing them is building a taxonomy that's specific enough for a CSM to act on without ambiguity, and adaptive enough to learn new patterns as your product, market, and customer base evolve.

A taxonomy built once and frozen will start to drift the moment your product changes. New features create new failure modes; new competitors create new switching language; new segments surface new time-bound complaints. The taxonomy needs to evolve with the data.

This is where Enterpret sits in the workflow. Enterpret's adaptive taxonomy auto-clusters verbatims into themes that update as new feedback arrives, so the "missing feature" and "integration breakdown" buckets aren't static keyword lists; they reshape themselves as customer language shifts. Every verbatim is then linked to the account record through the customer context graph, so a CSM looking at a flagged verbatim sees the contract value, renewal date, usage trend, and prior themes from that account in one place. The handoff to action runs through close-the-loop workflows, which route the verbatim into Slack or the CRM with the recommended play attached.

The point isn't the tooling; the point is that detection, enrichment, and routing all need to live in the same system. If your taxonomy lives in one tool, your account context lives in another, and your CSM workflow lives in a third, the 48-hour SLA becomes structurally impossible. That's the operational gap most teams underestimate when they try to build this in-house. Analyzing NPS verbatims at scale is a different problem from reading them one at a time, and the difference is exactly where the taxonomy and graph have to do the work.

For teams evaluating tooling, a separate breakdown of proactive churn prevention tools covers the broader landscape. The taxonomy work is the same regardless of vendor.

What happens when you ignore the verbatim (concrete example)

Account A. NPS 6, verbatim: "We're going to evaluate [competitor] in Q3 because the reporting layer hasn't been able to keep up with our analytics team's needs." No 48-hour outreach. CSM saw the score, not the words. Renewal call 75 days later: "We've decided to go in a different direction."

Account B. Same NPS bucket, same segment. Verbatim flagged inside 18 hours with switching-language tag. Solutions architect on a call by day three. Two-week scoping of a custom reporting solution. Account renewed and expanded by 40% the following quarter.

The score-only view treated these as identical accounts. The verbatim view treated them as completely different problems with completely different plays. This is the entire argument compressed into one comparison: the score is the alarm, but the verbatim is the diagnosis, and you can't run a save play on an alarm.

Frequently Asked Questions

Q

What's the difference between NPS score and NPS verbatim for predicting churn?

The score is a lagging indicator — by the time it drops, the customer has usually already moved through the early stages of a churn decision. The verbatim is leading: it captures the cause-and-effect language that explains why the score is low, often weeks before the customer formalizes a renewal call. Acting on the score alone wastes the lead time the verbatim was giving you.

Q

How quickly should you act on a churn-risk verbatim?

The target is a 48-hour intervention window from survey submission to CSM outreach. Customer frustration is most malleable in the hours immediately after it's articulated, and the act of submitting a verbatim is itself an implicit request for response. Programs that take longer than 5-7 days to make first contact see significantly lower save rates.

Q

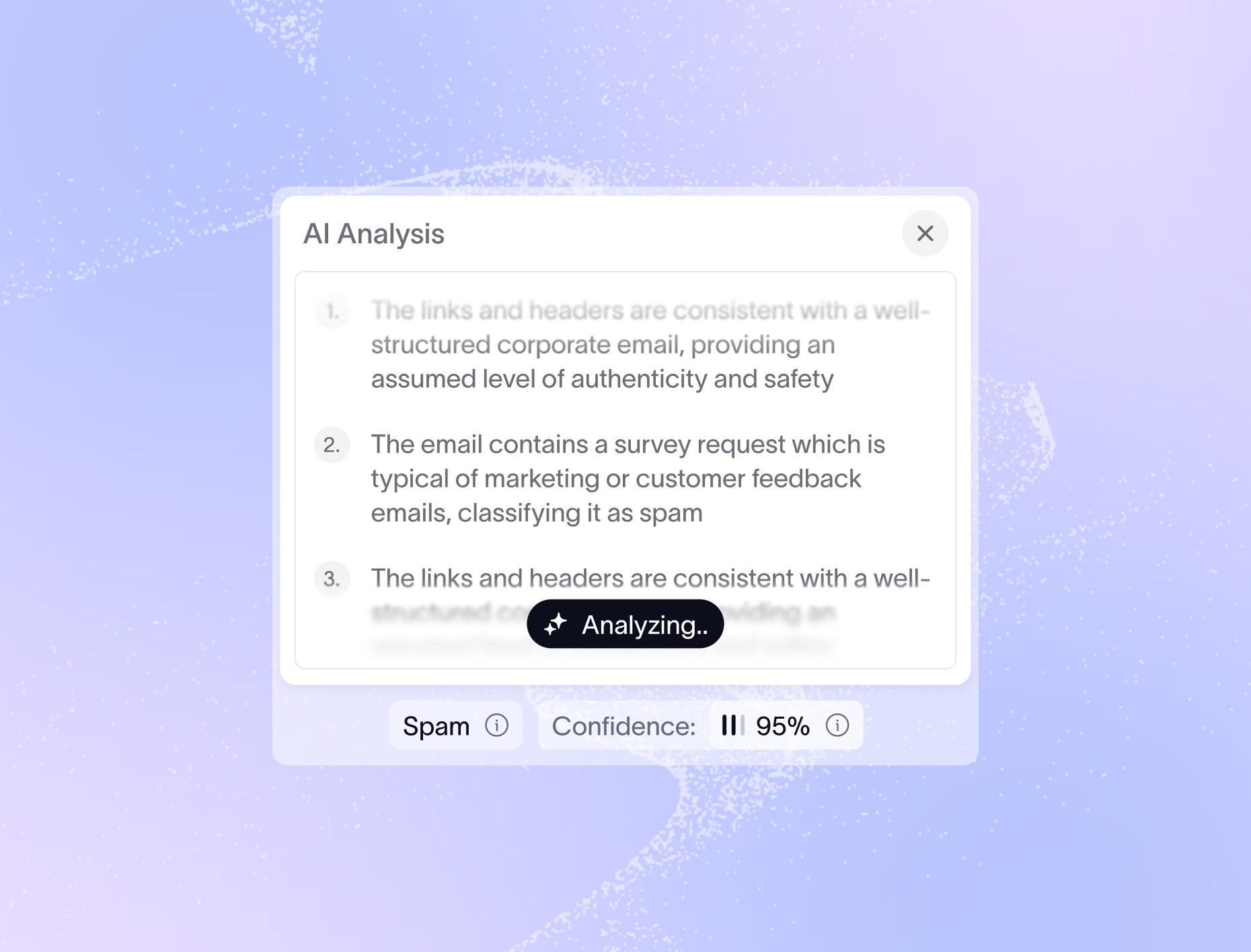

Can AI reliably identify churn-risk patterns in NPS comments?

For high-precision categories like switching language and time-bound complaints, modern LLM-based classifiers reach 90%+ accuracy with the right taxonomy and a few hundred labeled examples. The harder work is keeping the taxonomy current as language shifts — which is why an adaptive system that re-clusters verbatims as new feedback arrives outperforms a static keyword model over a 12-month horizon.
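
To check a claim like this on your own data, you can score the classifier's tags against a hand-labeled sample. The sketch below reports per-category precision and recall rather than a single accuracy figure, since rare categories such as switching language can look accurate while still being missed. The tag names and sample data are illustrative.

```python
from typing import Optional


def precision_recall(
    predicted: list[set], labeled: list[set], category: str
) -> tuple[Optional[float], Optional[float]]:
    """Per-category precision and recall over paired predictions and labels.

    Each element is the set of tags for one verbatim. Returns None for a
    metric whose denominator is zero.
    """
    tp = fp = fn = 0
    for pred, gold in zip(predicted, labeled):
        if category in pred and category in gold:
            tp += 1
        elif category in pred:
            fp += 1
        elif category in gold:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else None
    recall = tp / (tp + fn) if tp + fn else None
    return precision, recall


predicted = [{"switching_language"}, {"switching_language"}, set(), {"time_bound"}]
labeled = [{"switching_language"}, set(), {"switching_language"}, {"time_bound"}]
print(precision_recall(predicted, labeled, "switching_language"))  # (0.5, 0.5)
```

Re-running the same check on a fresh labeled sample every few months is one way to notice when customer language has drifted away from the taxonomy.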

Q

What if a passive (score 7-8) leaves a churn-risk verbatim?

Treat it as a tier-one signal. Passives with switching language or time-bound complaints often churn at higher rates than detractors, because they're already past the venting stage and into pragmatic evaluation. The score-only worldview ignores this segment entirely; the verbatim worldview catches it.

Q

How does NPS verbatim analysis fit into a broader churn prevention program?

NPS verbatims are one node in a larger feedback graph that also includes support tickets, community posts, sales call notes, and win/loss interviews. The verbatim is the most structured signal because it's tied to a score and a moment in time, but a mature program correlates all of them — same account, same theme, multiple channels — to separate noise from real renewal risk.

Next step

If your team is moving from score-watching to verbatim-acting, the first sprint is taxonomy and routing — get the 5 patterns defined, classify the last 90 days of verbatims against them, and stand up a 48-hour SLA with one CSM as the pilot owner. Measure save rate, not activity. Iterate the taxonomy from there.

Teams looking to operationalize this end-to-end can book a demo to see how Enterpret combines adaptive taxonomy, the customer context graph, and close-the-loop workflows into a single verbatim-to-action pipeline.