Your AI Isn't Reasoning. It's Navigating.

90% of your token budget burns before the thinking starts. I have the receipts.

Here are two outputs from the same AI model analyzing the same customer data.

Output A:

"Performance is the #1 issue across channels. Education and Enterprise segments are most affected. Community sentiment is mostly neutral with some vocal negativity (est. 5-13% negative). Recommend investing in performance optimization and user education."

Output B:

"Performance & Reliability: 1,510 negative insights across 97 accounts representing $237.5M in ARR. High-churn accounts disproportionately affected: Acme Corp ($1.5M ARR, CSAT 2.72), Meridian Media ($1.2M, CSAT 2.60), Lattice ($850K, CSAT 2.54). $3.55M at immediate risk."

The first tells you what's broken. The second tells you who to call Monday morning.

Same model. Same data. Same prompts. I ran this analysis 16 times and recorded everything: every token, every tool call, every dollar. Here's what I found.

16 runs, one variable

I took 25,000 real feedback records (support tickets, survey responses, call transcripts, community posts) and ran them through two conditions. In one, Claude got raw CSV exports, the way most teams work today. In the other, Claude accessed a Customer Context Graph: the same records, structured into a queryable taxonomy with account-level connections and trained sentiment.

16 runs across four personas (VP Product, Head of CX, UX Researcher and VoC Program Manager). Four runs per persona to account for variance. Every prompt specific for each persona and the dataset.

One constraint made everything else predictable: the raw dataset was 39 MB, roughly 10 million tokens. Claude's context window holds 1 million. The model could see about 10% of the data at any given time. So it samples. Opens some files, skips others, searches for keywords, builds a narrative from whatever it found first. Run the same prompt Tuesday and Thursday, you get different emphasis, sometimes opposite conclusions. The output reads with equal confidence both times.

121 tool calls before a single insight

The surprise wasn't the quality gap. It was being able to watch exactly where the intelligence went, call by call.

In the raw condition, I watched Claude make 121 tool calls on a single analysis. Here's what they looked like:

The model opens a CSV. Reads the headers. Doesn't find what it needs, so it runs a keyword search via bash. Counts matches. Opens another file. Re-reads headers it already scanned because the context window rolled past them. Computes averages in bash. Searches for a different keyword. Opens the same file a third time. Writes a summary of a subset. Realizes the subset might not be representative. Opens more files.

24 minutes of navigation before it starts synthesizing.

Roughly 80% of the token budget went to this kind of work: opening, searching, counting, re-reading. Only 10% went to reasoning. Out of ~126,000 tokens consumed, the model spent 12,600 actually thinking about customers. The rest was overhead.

The structured condition told a different story. No file navigation. No keyword searches. No re-reading. The model went straight to synthesis. 45% of a smaller total budget (61,000 tokens) went to reasoning, 27,400 tokens of actual thinking. More than double the raw condition in absolute terms, at half the total cost.

That's not analysis versus better analysis. That's someone sorting mail versus the same person doing the work they were hired for.

Confident, specific, and wrong

If this were only about depth, you could live with it. Shallower insights, less specificity, but directionally correct. Fine.

It's not directionally correct.

Claude analyzed community sentiment from raw data and estimated negativity at 5-13% based on keyword frequency. The Adaptive Taxonomy, trained on the actual domain, measured 40.1%.

That's a 3-8x misread. And it flips the decision. The keyword estimate says: community is mostly neutral, low priority. The trained measurement says: community is your most polarized channel and probably your most important early warning system. One conclusion deprioritizes community monitoring. The other makes it the first thing a VP checks every morning.

Both outputs present with the same confidence. Both read like analysis. The prose doesn't degrade when the reasoning is shallow. That's the trap. The quality of the writing masks the quality of the thinking. If you missed last week's piece on why your AI sounds smarter than it is, this is the data behind it.

Customer language makes it worse. "Just here to watch the dumpster fire" contains zero negative keywords. "I'm glad people are switching to …" reads as positive by word count. "Doing great work... for their shareholders" is sarcasm that sails right through a grep search. Keyword matching treats language as literal. Your customers don't speak literally.

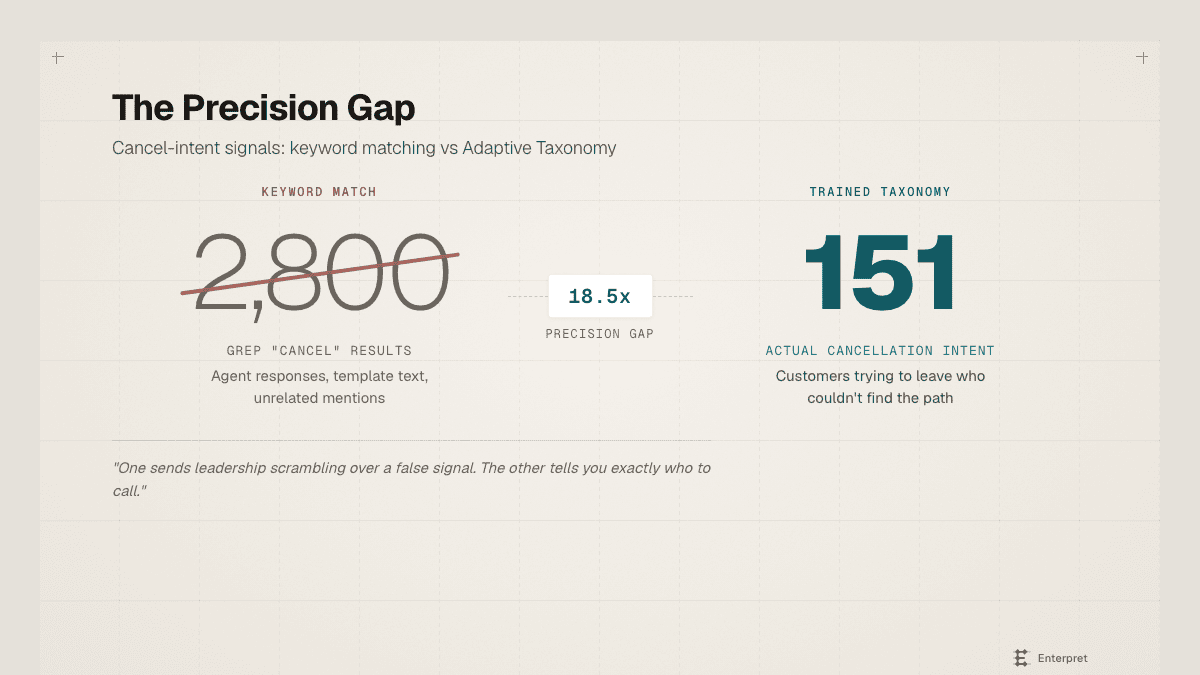

The cancel-intent example sharpens the point. Searching raw tickets for "cancel" returned 2,800 lines: agent responses ("I've cancelled the duplicate charge"), template text, unrelated mentions. It looks like a crisis. The structured taxonomy identified 151 actual cancellation-intent insights: customers trying to leave who couldn't find the path.

An 18.5x precision difference. One number sends leadership scrambling over a false signal. The other tells you exactly who is stuck, where in the flow, and what their accounts are worth.

The drift nobody measures

Three times faster. Half the cost. 48% higher quality. More than double the reasoning depth.

There is no trade-off here. The structured condition won on every axis, including cost. I expected better results. I didn't expect them to be cheaper.

But the number that should keep you up at night isn't the per-analysis cost. It's the compounding.

Every analysis your team runs against raw exports pays the same tax. Every week. You're building strategy, quarter after quarter, on a model that saw 10% of your data and got sentiment wrong by a factor of three to eight. That's not one bad report. That's a drift. Between what you think you know about your customers and what is actually true. And the drift is invisible, because the prose reads beautifully every single time.

The industry conversation is about which model to use. That conversation is about the last 20% of the problem. The first 80% was decided before the model saw its first token, by whether someone structured the context or just dropped in a file.

The difference is invisible in the output. It is only visible in the decisions.

If you want to see what this looks like when you fix the context layer: enterpret.com/claude-pilled